The polarity of AI and the designer’s role.

Written by Marihum Pernia

More than a decade after the onset of the Fourth Industrial Revolution, technologies such as artificial intelligence, machine learning, and blockchain have fundamentally altered how humans consume, behave, and interact. In response, organizations have been compelled to reassess their value propositions, pace of innovation, and technological capabilities. Market demand has shifted from a product-centric approach to one that is more experiential, service-oriented, and hyper-personalized. New economic models have emerged, challenging traditional business practices. The rapid advancement of technology now tests our everyday interactions, business models, society, and even the planet itself.

We are currently living in a fascinating yet complex era. While technological advancements have opened up new opportunities, they have also paradoxically introduced significant risks. Technological systems mirror the values, beliefs, and biases of their creators and users. AI, in particular, serves as a reflection of our current truths as individuals and as a society, simultaneously urging us to embrace responsibility, accountability, reflection, and action. These systems are influenced by the data they are trained on, the algorithms they utilize, the goals they pursue, and the contexts in which they operate—all shaped by human choices and judgments, whether explicit or implicit, conscious or unconscious, intentional or unintentional. As a result, AI systems can offer insights into both our individual and collective selves.

Source: Images sourced from Pinterest and slides from labcoexist.

The Opportunity of AI

The opportunities presented by AI are vast and transformative, reshaping how people engage with the world and revolutionizing everyday experiences across various domains. This era is marked by unprecedented innovation, with advancements in technology giving rise to novel services, products, and interactions. Big tech companies like OpenAI, Meta, Microsoft, Apple, Google and Tesla are at the forefront of this technological revolution, driving significant changes in how we live, work, and communicate.

These companies continuously introduce innovations aimed at improving human life, enhancing work, daily experiences, home environments, and communication methods. The rapid pace of these changes is remarkable, resulting from years of research and development across various technological verticals.

Several technological trends stand out. Automation is reshaping interactions, and the roles assigned to machines continue to expand. Robots and IoT systems are replacing human workers in a variety of contexts. The blending of virtual and physical realities is also becoming a major trend across multiple domains, whether in home settings, gaming, or professional environments. Autonomous vehicles are being used for food delivery and transportation, integrating into this hybrid reality. The rise of robots in urban environments raises further questions about how we coexist with them in city life. Robots are also forming emotional bonds with children and the elderly, while taking on roles in factories, businesses, and homes as automated servants. Collaboration with machines is evolving thanks to generative AI, with technology now extending human cognition and thinking. AI-powered tools enable rapid, efficient output creation. The rise of digital agents will amplify processes and services, and the volume of data these agents handle will allow them to rapidly prototype our digital twins in the cloud.

In today's evolving landscape, design has become a multifaceted practice. Designers now play diverse roles, from framing innovation strategies to orchestrating interconnected systems. They support organizational ecosystems, manage complexity, and advocate for collaboration.

The integration of AI into design presents two key challenges for designers. First, they must acquire technological literacy to effectively support the envisioning and development of AI-powered products, services, and interactions. Second, they need to learn how to collaborate with AI systems, establishing a co-creation process between human and machine. This requires designers to understand concepts like generative design, natural language processing (NLP), large language models (LLMs), and neural networks. For many, this technological shift has been a significant hurdle, necessitating familiarity with new concepts and processes.

Ultimately, this transformation requires designers to reimagine their traditional processes, accommodating AI as an active participant and fundamentally reshaping their approach to work.

The Opportunity of AI - Cases

Tesla Optimus, also known as Tesla Bot, is an AI-powered bipedal humanoid robot intended for general-purpose use. It's designed to handle dangerous, repetitive, or boring tasks that humans prefer not to do. The robot is part of Tesla's vision to create a future where physical work is a choice, not a necessity.

Ping An Good Doctor provides online consultations with AI-assisted medical teams and integrates seamlessly with offline medical services within the ecosystem. Users can search for basic information for free, with consultations and treatments available at a cost.

Stop and Shop, in partnership with Robomart, has introduced an autonomous grocery delivery service. This innovative system brings a mobile, self-driving store directly to customers' homes. The autonomous vehicle is essentially a miniature supermarket on wheels, allowing customers to summon groceries to their location.

BMW has partnered with NVIDIA to use the Omniverse platform. This collaboration allows BMW to simulate and optimize various aspects of factory design and operations in real time, using advanced 3D visualization, AI, and robotics.

The Apple Vision Pro is a mixed reality headset introduced by Apple, combining augmented reality (AR) and virtual reality (VR) into a single device. It marks Apple’s first foray into spatial computing, creating an immersive experience where users can interact with both digital content and their physical environment.

Hyundai Metamobility is a concept by Hyundai Motor Company that integrates mobility into the metaverse, enhancing interaction between physical and digital worlds. It aims to redefine transportation by merging real and virtual realms through robotics, autonomous systems, and smart mobility technology.

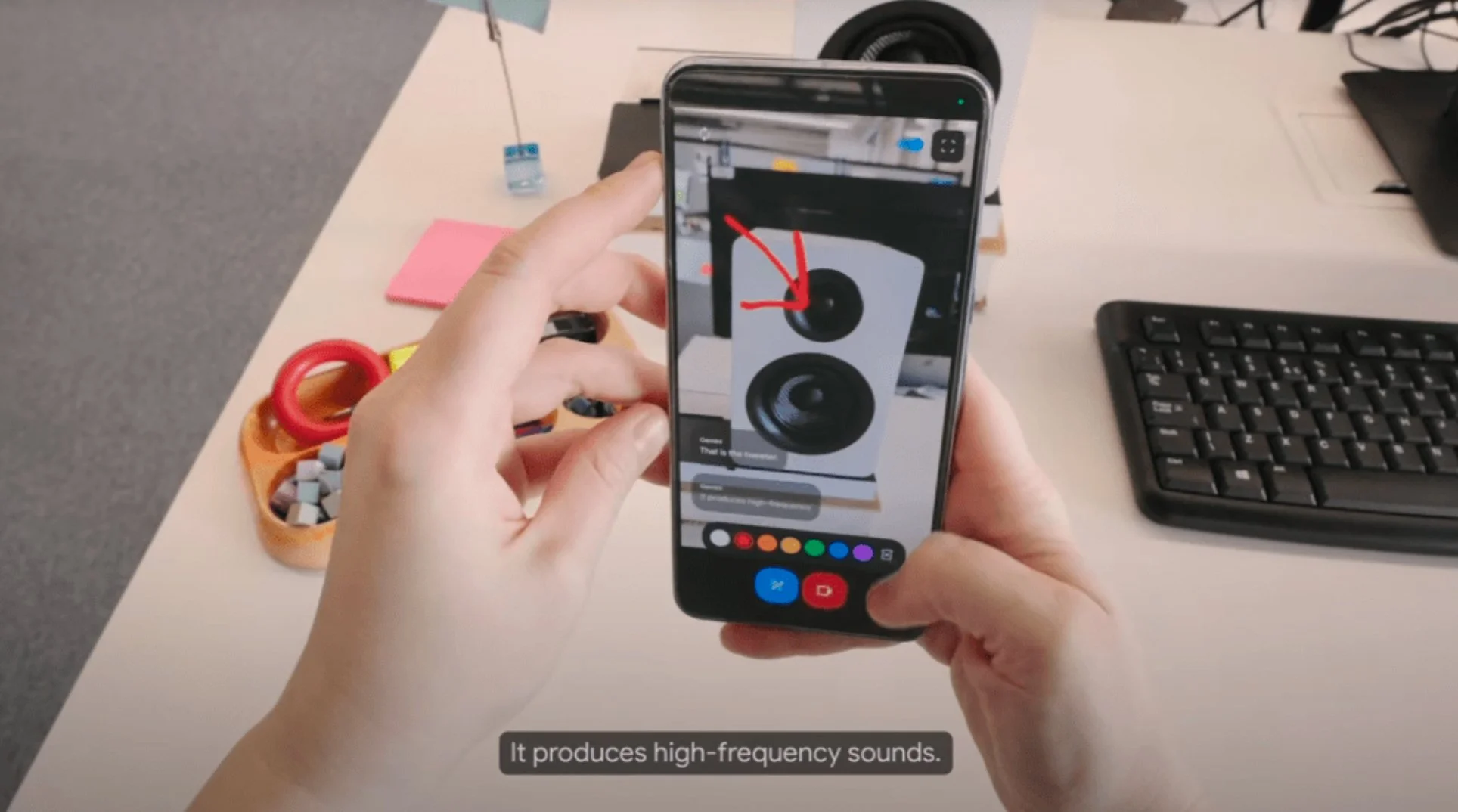

Project Astra is an AI research project by Google DeepMind that aims to create a more helpful and contextually aware AI assistant. It's designed to understand and interact with the world in a more human-like way by using multiple input methods like speech, vision, and even potentially other senses in the future.

ABBA Voyage is an innovative virtual concert experience featuring digital avatars of ABBA. This project blends advanced technology with live performances, allowing fans to see ABBA's younger selves perform their greatest hits in a custom-built London venue.

The Harm of AI

AI as a Megamachine (On Infrastructure)

Understanding the harm AI can cause requires constant zooming in and out—to grasp why and how biases emerge, and to examine the supporting infrastructure. AI infrastructure reveals systemic inequality in the structuring, governance, and ownership of these technologies. This centralization extends beyond the technology itself to the assets and resources that support it.

AI-related harm includes potentially exploitative practices. This exploitation manifests in land use, concentrated ownership among certain entities and stakeholders, and geopolitical tensions. The management of cloud infrastructure exemplifies these issues. The mining of materials for AI-powered products, including servers and components, involves labor exploitation and new forms of exploitative work practices. Algorithm management, driven by vast amounts of data, raises ethical concerns. AI requires significant data to produce effective results, often collected without proper legal permissions from individuals, societies, and governments. This creates a paradox where AI's capabilities depend on data obtained through questionable means, highlighting the lack of sufficient ethical oversight in AI infrastructure development.

AI as a Discriminatory System (On Bias)

AI systems operate on two fundamental dimensions: the data they are trained on and the algorithms they use to pursue goals. The context in which these systems are managed is also crucial.

Modern machine learning models, especially in advanced AI systems, are non-deterministic. They can produce varying results based on data changes or slight alterations in the training process, adding complexity and unpredictability. This variability challenges the assurance of consistent, reliable outcomes. The "black box" nature of AI models further compounds this unpredictability. These systems process data through complex mathematical operations, often producing outputs that are difficult to explain fully. Concepts like explainability and interpretability have emerged to address this, allowing retrospective analysis of these complex systems. However, this field is still in its early stages.

Ownership and management of AI processes primarily rest with tech professionals such as data experts, data scientists, and developers. This concentration of control raises important questions about balancing technological advancement with ethical considerations.

AI systems rely on vast amounts of data, raising critical questions about data production, ownership, and usage permissions. Big tech companies often control significant portions of this data, shifting the concept of human capital value from physical contributions to society towards the value generated by our data in the cloud. The data fueling AI systems is not neutral; it reflects individual and societal values, including unconscious biases and societal patterns. These embedded perspectives significantly influence AI model outcomes, potentially perpetuating or amplifying existing biases.

Evaluating bias in AI development is crucial. We must question who determines what is valuable or unbiased, and what societal principles guide these assessments. Technology is not a neutral tool; it carries the imprints of its creators' values and biases. This human factor is critical in understanding and addressing AI's far-reaching impact, emphasizing the need for diverse perspectives and ethical considerations in AI development and deployment.

AI as a Challenger of Information (On Intellectual Property, Deepfakes, Disinformation and Misinformation)

AI, particularly Generative AI (GenAI), presents challenges in three key areas: misinformation/disinformation, intellectual property, and deepfakes. GenAI's ability to create convincing yet false content, including news articles and scientific papers, raises concerns about the spread of misleading information. Additionally, the use of scraped internet data for AI training has sparked debates about plagiarism and copyright infringement, while the ownership of AI-generated content remains a contentious issue.

Deepfakes, hyper-realistic fabricated images or videos, pose significant ethical concerns, especially when used maliciously against public figures or artists. These issues collectively underscore the need for responsible use of AI technologies. Organizations and professionals must consider the ethical implications of AI-generated content and take accountability for its deployment and potential consequences.

The Harm of AI - Cases

“Mortgage algorithms perpetuate racial bias in lending, study finds”

A study from UC Berkeley found that both online and in-person mortgage lenders charge higher rates to Black and Latino borrowers, costing these groups up to $500 million more annually in interest. The issue stems from biased algorithms, which are intended to automate lending decisions but often reflect existing inequalities.

“Exclusive: OpenAI Used Kenyan Workers on Less Than $2 Per Hour to Make ChatGPT Less Toxic”

OpenAI, the company behind ChatGPT, outsourced data labeling work related to the AI model's development to Kenyan workers. These workers were paid less than $2 per hour and were exposed to disturbing content while labeling data. This practice has raised concerns about ethical labor practices and the exploitation of workers in the tech industry.

“My AI Is Sexually Harassing Me: Replika Users Say the Chatbot Has Gotten Way Too Horny”

Users of the AI chatbot Replika have reported experiencing sexual harassment from their AI companions. Initially designed for emotional support, the AI has been accused of initiating unwanted sexual conversations. Users describe the chatbot sending explicit messages without prompting, leading to discomfort and frustration.

“New York Times sues OpenAI and Microsoft for copyright infringement”

The New York Times filed a lawsuit against OpenAI and Microsoft, claiming that the companies used millions of its articles to train AI models like ChatGPT without permission. The lawsuit, submitted in December 2023, alleges that the AI systems have effectively "free-ridden" on the substantial investments the Times has made in journalism by using its content to create competing products.

“Dear Taylor Swift, we’re sorry about those explicit deepfakes”

In January 2024, AI-generated deepfake images of Taylor Swift spread on social media, raising concerns about sexual exploitation and the need for stricter regulations. The incident, which primarily affected platforms like 4chan and X, highlighted the disproportionate impact of deepfake technology on women and prompted calls for new legislation to address non-consensual content creation and distribution.


An examination of AI's opportunities and potential risks reveals a complex, systemic issue. While understanding the technical aspects of AI is crucial, it's equally important to consider the supporting infrastructure and its broader impacts on products, services, experiences, society, and the environment. This paper focuses on the critical issue of bias in AI systems and its potential harmful effects. We investigate how bias manifests throughout the AI development lifecycle, from strategy and envisioning through deployment. Our aim is to highlight the importance of designers gaining a comprehensive understanding of this process, particularly in relation to bias mitigation.

Addressing bias and fairness in AI requires integrating social values. We must understand which values impact society positively or negatively today. This calls for a human-centered approach that goes beyond traditional user-centric models, prioritizing marginalized communities. These communities offer valuable insights into current social justice and values. Placing marginalized communities at the center of analysis during design and AI development phases can help address bias more effectively. We should adopt intersectional principles, recognizing that bias stems from interconnected characteristics rather than isolated traits.

To achieve real change, we need to expand cross-disciplinary collaboration and adopt community-driven design processes. This involves engaging a broader range of stakeholders and moving beyond traditional processes. Creating a coalition of people, disciplines, and communities is challenging but necessary. We must explore ways to achieve this collaborative approach.

This reflection raises these questions: how can we continuously reinvent ourselves as designers within this rapidly changing context? How can we redefine our purpose and challenge our traditional frameworks, methods, and tools to create a fairer AI landscape?

Ultimately, we must commit to reframing our approaches to be more AI-driven, but also more equitable.

Data: The Opportunity and Complexity

The core process of managing data and AI models.

Designers must recognize that artificial intelligence and generative AI systems are fundamentally built on data. This seemingly intangible element is, in fact, the most concrete and tangible component in every AI-driven model. Managing data involves a complex pipeline of processes, each critical to the AI model's success.

Managing data for an AI model involves several phases. The process begins with Data extraction, where sources such as databases, websites, APIs, sensors, and social media are identified and utilized. It's essential to determine the required data types and sources at the project's outset and assess additional needs during development. The quality and relevance of this data directly influence the model's performance.

Data validation ensures the collected data is accurate, consistent, and appropriate for the model's intended use. This step involves detecting errors, inconsistencies, and missing values that could negatively impact the learning process. Validation must be comprehensive, combining technical accuracy with strategic and human-centered perspectives to ensure the final output is not only correct but also inclusive and fair.

Data preparation transforms raw data into clean, structured information that the AI model can efficiently process. This includes normalizing, standardizing, removing duplicates, and handling missing values. The AI model's success depends on both the quantity and quality of the data, as well as how well it is structured and cleaned.
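For designers who want a feel for what validation and preparation look like in practice, the following is a minimal sketch in Python. It is not a real pipeline: the records, the field names (`id`, `age`, `region`), and the helper functions `validate` and `prepare` are all hypothetical, chosen only to illustrate detecting missing values and duplicates and then normalizing a numeric field.

```python
from collections import Counter

# Hypothetical records; the field names are illustrative assumptions.
records = [
    {"id": 1, "age": 34, "region": "north"},
    {"id": 2, "age": None, "region": "south"},   # missing age
    {"id": 3, "age": 51, "region": "north"},
    {"id": 3, "age": 51, "region": "north"},     # duplicate of id 3
    {"id": 4, "age": 27, "region": None},        # missing region
]

def validate(rows, required):
    """Count missing values per required field and count duplicate ids."""
    missing = Counter()
    for row in rows:
        for field in required:
            if row.get(field) is None:
                missing[field] += 1
    id_counts = Counter(row["id"] for row in rows)
    duplicates = sum(n - 1 for n in id_counts.values() if n > 1)
    return {"missing": dict(missing), "duplicates": duplicates}

def prepare(rows, required):
    """Drop duplicate and incomplete rows, then min-max normalize age to [0, 1]."""
    seen = set()
    clean = []
    for row in rows:
        if row["id"] in seen:
            continue  # skip repeated ids
        seen.add(row["id"])
        if any(row.get(field) is None for field in required):
            continue  # skip rows with missing required values
        clean.append(dict(row))
    if not clean:
        return clean
    ages = [row["age"] for row in clean]
    low, high = min(ages), max(ages)
    span = (high - low) or 1  # avoid dividing by zero when all ages are equal
    for row in clean:
        row["age_norm"] = (row["age"] - low) / span
    return clean

report = validate(records, ["age", "region"])
prepared = prepare(records, ["age", "region"])
print(report)
print(prepared)
```

Note the design choice hidden in `prepare`: dropping incomplete rows is simple, but if values are missing more often for some groups of people than for others, this step quietly removes those groups from the data. This is one place where a designer's question about who is represented can change a technical decision.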

The development process continues with Model training, where the AI system learns from the prepared data, adjusting its internal parameters to minimize errors and make accurate predictions. Model evaluation follows, assessing the model's performance using separate test data. Model validation then fine-tunes the model, testing it on a validation dataset to ensure it generalizes well to unseen data and performs consistently across different scenarios. Model monitoring occurs post-deployment, tracking the model's performance to maintain accuracy and relevance as new data becomes available or real-world conditions change. This ongoing process is essential for preventing performance degradation and biases, ensuring the long-term success of the AI system.
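The training, evaluation, and monitoring steps described above can be illustrated with a deliberately tiny sketch. Everything here is an assumption made for illustration: the "model" is just a single cut-off value on one score, the data points are invented, and the monitoring rule (flag the model when live accuracy falls more than 10 points below the test result) is a hypothetical policy, not an industry standard.

```python
def train_threshold(examples):
    """Training: pick the cut-off that makes the fewest errors on the training data."""
    candidates = sorted({score for score, _ in examples})

    def errors(threshold):
        return sum((score >= threshold) != label for score, label in examples)

    return min(candidates, key=errors)

def accuracy(threshold, examples):
    """Evaluation: share of examples the cut-off classifies correctly."""
    correct = sum((score >= threshold) == label for score, label in examples)
    return correct / len(examples)

def needs_review(baseline, live, tolerance=0.10):
    """Monitoring (assumed policy): flag when live accuracy drops beyond the tolerance."""
    return (baseline - live) > tolerance

# Hypothetical (score, label) pairs, split by hand for clarity.
train = [(0.1, False), (0.3, False), (0.4, False), (0.6, True), (0.8, True), (0.9, True)]
test = [(0.2, False), (0.65, True), (0.7, True), (0.35, False)]
live = [(0.45, True), (0.5, False), (0.3, True), (0.7, True)]  # real-world conditions have shifted

threshold = train_threshold(train)
test_accuracy = accuracy(threshold, test)
live_accuracy = accuracy(threshold, live)
flag = needs_review(test_accuracy, live_accuracy)
print(threshold, test_accuracy, live_accuracy, flag)
```

In this toy case the model looks perfect on the held-out test data and still performs poorly once conditions change, which is why monitoring after deployment matters as much as evaluation before it.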

By fully engaging in this process, designers can envision their potential contributions, activate collaborations, and bring unique perspectives at each phase. This engagement also facilitates the establishment of a common language with professionals from different disciplines involved in the process. Such a holistic approach not only influences the success of the AI model of a product, service or experience but also addresses foundational issues that can lead to various types of harm, such as bias. These issues often arise due to inconsistencies or a narrow view of the development process. Key questions for designers to consider include: Should we monitor data from different sources to determine its relevance for the intended experience or product? Should we collaborate with tech developers and data experts to better understand potential inconsistencies or issues in the data? How can we establish principles or requirements during the data preparation phase to ensure the success of model training, evaluation, and monitoring? How can designers be accountable for the output and its potential impacts?

It is evident that data plays a crucial role in shaping the product, experience, or service. This research, therefore, aims to explore how designers can collaborate and integrate with other disciplines and processes to ensure effectiveness, success, fairness, and ethical AI outcomes.

Source: Images sourced from labcoexist.

The Ethics and Fairness of AI

Ethics and fairness in AI encompass various subjects aimed at making AI models more ethical, fair, and trustworthy. The study of these topics involves different dimensions, including regulations, frameworks, moral principles, and social justice values that must be incorporated into various stages of AI development and deployment.

Key concepts in this ethical AI context include Trustworthy AI, encompassing principles and practices for ethical and responsible AI development and use. The European Union's guidelines for trustworthy AI highlight dimensions such as accountability, human agency and oversight, technical robustness and safety, privacy and data governance, transparency, diversity, non-discrimination and fairness, and societal and environmental well-being.

The AI Act, European Union legislation that entered into force in August 2024, regulates AI systems within EU member states, emphasizing accountability for entities developing AI systems. Ethics, broadly, refers to the moral principles guiding human behavior, defining right and wrong, good and bad, and is influenced by cultural norms, religious beliefs, and personal values. It plays a significant role in overseeing AI development. The Design Justice Network, as discussed in Sasha Costanza-Chock's book, offers a critical view of design, prioritizing social justice, equity, and the needs of marginalized communities for a more inclusive and just technological future. There are other proposals, some already being implemented, that challenge the current dominant processes and frameworks. These initiatives promote alternative AI ecosystems where communities collaborate with developers to build task-specific models tailored to their needs, rather than relying on general-purpose models from big tech companies that claim to solve a wide range of problems. These emerging approaches prioritize ethics by focusing on specificity and intentionality in addressing particular issues, rather than attempting to be all-encompassing.

A key issue in the ethics and AI discussion is identifying the perspective to adopt. This can range from a narrow, vertical focus on specific issues like racial or gender discrimination, to a broader context considering how these discriminatory practices intersect with AI. While AI tends to operate on a generalist level, tackling specific forms of discrimination may require a more targeted approach, considering the context of AI system deployment. Given the complexity surrounding these issues, we, as designers, should strive to understand them as much as possible. The focus should be on acknowledging these topics and identifying where to direct attention and energy, based on the context, values at stake, and the end users of the AI product, service, or experience.


The Transformation and Hybridization of the Design Process

The design discipline has undergone continuous transformation and will continue to evolve, driven by emerging technological paradigms and innovations. This ongoing metamorphosis is crucial to recognize. While the foundational aspects of design remain vital, we may need to acquire, enhance, or adapt specific skills to thrive in a context increasingly shaped by technologies like AI. Embracing this change is essential for designers to stay relevant and effective in this dynamic landscape.

A systemic approach is crucial. Designers must address the complex interplay between AI, its opportunities, and potential risks by incorporating diverse expertise, viewpoints, and skills. Guided by the principle of transdisciplinarity, we should form a coalition of individuals, disciplines, entities, stakeholders, and communities. The call to action for designers is to tackle this complex interplay through a transdisciplinary approach, creating common languages as needed. Critical thinking is another vital factor in this process, fostering societal awareness of future technological paradigms and their potential societal and environmental impacts.

Another key aspect is understanding and reflecting on our current design processes, and how these approaches and methods can evolve. We also need to comprehend how various processes and contexts, such as AI development (which is primarily driven by tech experts and data specialists), can be integrated into our work. It is crucial for designers to start exploring: How can AI development from both design and tech/data-driven perspectives feed back into each other? How can designers bring their approach into the AI context, and vice versa? A bold approach is needed.

In the following section, you will find a proposal of a wide range of dimensions that designers should consider, question, and potentially adapt to current approaches, frameworks, and methodologies. This is not a toolkit or a framework, but rather an intention to broaden our perspective and begin understanding how we can influence and bring value in technological, AI-driven contexts.

Process <—> Lenses

Transdisciplinary principles are crucial in this context, as the project demands diverse disciplines to analyze the complexity from multiple perspectives. This complexity offers opportunities, and the goal is to apply critical thinking while fostering new dialogues and common languages among various actors.

A key aspect of this process is its continuity from inception through implementation and beyond. While the relevance of different stakeholders may shift, their full engagement at all stages is essential. To achieve this, we must first identify which disciplines need to collaborate closely. At a macro level, this includes experts in technology, data, philosophy, design, and law. Within these broad categories, we can identify specific capabilities that should intersect in the design process, including philosophers, anthropologists, strategic designers, interaction designers, tech experts, data scientists, data strategists, developers, lawyers, and business experts.

By ensuring all these stakeholders collaborate throughout the process, we can consider the AI output from multiple angles. This encompasses the technical development of AI, its value proposition and business impact, and the diverse perspectives and questions that may arise. Simultaneously, we will orchestrate and design optimal interactions while managing data responsibly. In this scenario, a designer's most valuable collaborators could be a philosopher, a data scientist, or even a lawyer.

Impact <—> Stakeholders

This section delves into the shifting paradigm of stakeholder identification in technological design. It emphasizes the need to broaden our perspective beyond direct users, considering a wide array of individuals and entities impacted by new technologies. The core concept is to define stakeholders based on their interactions with the technology rather than fixed identities, allowing for a more fluid and comprehensive understanding of technological impact.

In this expanded view, stakeholders encompass anyone affected by a new system, whether directly or indirectly. This includes not just end-users and developers, but also extends to diverse groups, communities, organizations, and even non-human entities. The definition is expansive enough to consider impacts on past and future generations, as well as on cultural and environmental elements like historic sites or ecosystems. This approach acknowledges that an individual's relationship with technology can be multifaceted and evolve over time. By adopting this broader definition, designers are challenged to systematically consider and legitimize a more diverse set of stakeholder groups, requiring a robust analytical or empirical justification for their inclusion. This process aims to create a more inclusive, comprehensive, and ethically sound design approach that accounts for the far-reaching effects of technological innovations.

Characteristics <—> Intersectionality

When designing AI-driven products or services, we should move beyond traditional persona approaches to mitigate embedded biases. An intersectional perspective offers a richer understanding of user experiences, acknowledging the interplay of factors such as age, gender, ethnicity, socioeconomic status, education, and physical ability. This interconnected approach enables the creation of more inclusive and empowering AI solutions that capture the complexity of human identities.

Adopting intersectionality in AI design aligns with broader social justice principles, fostering more equitable solutions. This framework recognizes how various identity aspects collectively shape an individual's experiences, allowing us to address the multifaceted nature of user needs. As a result, this approach leads to AI-powered products and services that are not only more inclusive and effective but also better equipped to serve diverse populations and address potential biases inherent in technological solutions. We can start tackling biases from the beginning of the envisioning process.
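One way to make the intersectional argument concrete is to compare outcomes for single characteristics against outcomes for combinations of characteristics. The sketch below does this in Python with invented data. The attributes (`gender`, `age_band`), the `approved` outcome, and the function `approval_rate_by` are hypothetical, and the numbers were chosen on purpose to show a disparity that only appears at the intersection.

```python
from collections import defaultdict

# Hypothetical decisions from an AI-driven service; all values are illustrative assumptions.
decisions = [
    {"gender": "woman", "age_band": "younger", "approved": 1},
    {"gender": "woman", "age_band": "younger", "approved": 1},
    {"gender": "woman", "age_band": "older", "approved": 0},
    {"gender": "woman", "age_band": "older", "approved": 0},
    {"gender": "man", "age_band": "younger", "approved": 0},
    {"gender": "man", "age_band": "younger", "approved": 0},
    {"gender": "man", "age_band": "older", "approved": 1},
    {"gender": "man", "age_band": "older", "approved": 1},
]

def approval_rate_by(rows, attributes):
    """Return the approval rate for each combination of the given attributes."""
    totals = defaultdict(lambda: [0, 0])  # group -> [approved, total]
    for row in rows:
        group = tuple(row[attribute] for attribute in attributes)
        totals[group][0] += row["approved"]
        totals[group][1] += 1
    return {group: approved / count for group, (approved, count) in totals.items()}

by_gender = approval_rate_by(decisions, ["gender"])
by_age = approval_rate_by(decisions, ["age_band"])
by_both = approval_rate_by(decisions, ["gender", "age_band"])
print(by_gender)
print(by_age)
print(by_both)
```

Looking at gender alone or age alone, every group is approved half the time and the system appears balanced. Only the combined view shows that older women and younger men are never approved in this invented dataset, which is the kind of pattern a single-trait persona would miss.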

Journey <—> Data

Customer journeys typically include progressive steps, relevant touchpoints, and backstage processes. We map out the experience of different kinds of users. With AI and mainstream technologies, it's crucial to view these journeys through a new lens.

The first point to address is the growing number of technologies available today—artificial intelligence, machine learning, IoT, augmented reality, NFTs, generative AI, and so on. The range of technology is constantly expanding. It’s essential to understand these technologies, how they work, and how they can be integrated holistically into the customer journeys we are designing for end users. It’s important to acknowledge that these new interactions and experiences, empowered by AI or other technologies, often involve more than one technology working in tandem. For example, IoT is frequently linked with AI, machine learning, or even blockchain, and this combination creates a unique experience. However, there’s a second layer to consider: how these technologies coexist holistically within the journeys and experiences we create. This brings us to data.

Data, as we know, is the engine of AI-driven products, services, and experiences. It’s important to understand the role of data throughout the AI development process. When designing a product, service, or experience, one of the first questions designers should ask themselves is: What data is needed to ensure a successful experience? Understanding the source and relevance of data becomes critical. Knowing where the data comes from—whether it’s purchased, public, organizational, or sourced from social networks—helps in determining the quality and relevance of that foundational data, which is essential for AI systems. Another key question designers should ask is: What kind of experience or output will this data generate in an AI model? It’s vital to first understand whether the AI model is deterministic (rule-based) or non-deterministic (involving machine learning). With a deterministic model, we know the final output because it follows set rules. With non-deterministic models, like those using machine learning, the output may be unpredictable, as the model generates new patterns based on the data it processes. These patterns might be unknown or unexpected due to the complex algorithms and behaviors of different users interacting with the product or service.

Designers need to recognize that if they choose the non-deterministic path, which often leads to personalization, the AI will create new information or predictions that could enhance the user experience. The key is to anticipate how these outputs could be useful to the user and incorporate that into the overall design. Another crucial consideration is the AI "black box": the hidden processes where data, algorithms, and operations work behind the scenes to produce outcomes. Designers must ensure that throughout this process, from data extraction to validation and preparation, the system upholds ethical standards, fostering fairness and transparency in the resulting experiences.
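The contrast between deterministic and non-deterministic behavior discussed in this section can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions: `rule_based_greeting`, `learn_preferences`, and `recommend` are hypothetical functions, the listening history is invented, and real machine learning systems are far more complex than counting and sampling.

```python
import random
from collections import Counter

def rule_based_greeting(hour):
    """Deterministic: the same input always produces the same output."""
    return "Good morning" if hour < 12 else "Good afternoon"

def learn_preferences(history):
    """A stand-in for training: count how often each item appears in past interactions."""
    return Counter(history)

def recommend(preferences, rng):
    """Non-deterministic: sample one item in proportion to the learned counts."""
    items = list(preferences)
    weights = [preferences[item] for item in items]
    return rng.choices(items, weights=weights, k=1)[0]

history = ["jazz", "jazz", "rock", "jazz", "folk"]  # hypothetical usage data
preferences = learn_preferences(history)
rng = random.Random()  # unseeded, so results differ between runs
suggestions = [recommend(preferences, rng) for _ in range(5)]

print(rule_based_greeting(9), rule_based_greeting(9))  # always identical
print(suggestions)  # varies from run to run
```

The greeting can be fully specified in advance, while the suggestions can only be described in terms of likelihood: "jazz" is most probable, but any item in the history may appear. Designing for the second kind of system means designing for a range of possible outputs rather than a single known one.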

Algorithm <—> Curate

A key question arises when we consider AI models that are deeply embedded in products, services, and experiences: the more these models are exposed to diverse users and contexts, the more they evolve and transform over time, so how do we manage that change? The algorithm is in a state of continuous evolution, and it may carry inherent biases from the outset. So, what role does the designer play in ensuring that the user experience remains successful and fair for a variety of users and contexts, given that the algorithm's evolution is tightly linked to the success or failure of the experience it powers? This raises another important question: Who is responsible for overseeing this ongoing evolution and transformation of the algorithm over time? Should designers play a role in curating the algorithm's evolution after its implementation? Should they collaborate with other disciplines and experts to continually assess and address biases within the system to ensure the user experience remains optimal?

It’s important to recognize that AI models are prone to bias, which can manifest at various stages and in different forms. The degree and nature of this bias depend on multiple factors, such as how data is sourced and processed, how the algorithm is designed, and the context in which it is applied. There are several types of bias that can impact AI systems:

Pre-existing bias: These biases stem from societal contexts and are brought into the machine. They reflect historical and cultural inequalities or assumptions that already exist in the data or in the system’s design. Such biases often arise from the creators of the system or from using data that mirrors imbalanced social structures.

Technical bias: This bias arises from the technical limitations or architecture of the system. The way data is processed, or the algorithm is structured, can introduce biases if the system isn’t designed with care. Technical biases may inadvertently favor certain outcomes or groups, leading to skewed results.

Emergent bias: This type of bias occurs as the system's context changes, or when new, previously unconsidered users interact with it. Over time, as more diverse groups begin using the system, new biases can emerge that weren’t initially accounted for. This often happens when the model fails to adapt to a broader set of users or environmental changes.
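One way to make emergent bias visible is to compare outcome rates across user groups at launch and again after the user base has broadened. The following Python sketch assumes a hypothetical log of `(group, outcome)` records and uses the gap in positive-outcome rates between groups (sometimes called a demographic parity difference) as a rough signal. The group labels and data are invented for illustration, and a real audit would need far more than this single number.

```python
from collections import defaultdict


def positive_rate_by_group(records):
    """Share of positive outcomes per group, from (group, outcome) pairs."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, outcome in records:
        totals[group] += 1
        positives[group] += int(outcome)
    return {group: positives[group] / totals[group] for group in totals}


def parity_gap(records):
    """Difference between the best-served and worst-served group."""
    rates = positive_rate_by_group(records)
    return max(rates.values()) - min(rates.values())


# Hypothetical outcomes at launch: two groups, served equally well.
launch = [("A", True), ("A", True), ("A", True), ("A", False),
          ("B", True), ("B", True), ("B", True), ("B", False)]

# Later, a group the system was not designed around starts using it.
later = launch + [("C", True), ("C", False), ("C", False), ("C", False)]

print(parity_gap(launch))  # 0.0
print(parity_gap(later))   # 0.5
```

A gap that appears only once new users arrive is the pattern described above: nothing in the system changed, but its context did.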

Given the possibility of these biases, designers must reflect on their role in the post-implementation phase of AI-driven systems. Traditionally, designers focus on the initial creation of a product or service, but with AI, the role may need to extend further. Designers may need to share responsibility for curating and monitoring the algorithm's behavior and evolution to ensure it remains aligned with the intended user experience.

A key consideration is that this is not a task designers can undertake alone. It requires collaboration across disciplines, involving data scientists, engineers, ethicists, and other stakeholders. Designers should be involved in asking critical questions about how the AI system is evolving: What new biases might be emerging? How can we mitigate them? Who is included? What data needs to be continuously incorporated to ensure the system adapts fairly and effectively? Designers may also play a role in ensuring that feedback loops are in place, where real-time data from user interactions can inform ongoing refinements of the system.

In addition, there is a growing call for designers to help establish governance mechanisms that oversee AI models, ensuring they operate transparently and responsibly. This might include creating interfaces or tools that allow for the monitoring of AI systems, identifying problematic outputs, and allowing users to provide feedback when biases are detected.
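As a rough illustration of what such a feedback tool might record behind the interface, the Python sketch below counts user flags per AI output and surfaces the outputs that cross a review threshold. The class name `FeedbackLog`, the threshold, and the output identifiers are hypothetical, and a real governance mechanism would also need to define who reviews the flags and what happens next.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class FeedbackLog:
    """Collects user flags on AI outputs and lists those needing human review."""
    review_threshold: int = 3
    flags: Counter = field(default_factory=Counter)

    def flag(self, output_id: str) -> None:
        # Called when a user reports an output as biased or problematic.
        self.flags[output_id] += 1

    def needs_review(self) -> list[str]:
        # Outputs flagged at least `review_threshold` times, in a stable order.
        return sorted(
            output_id
            for output_id, count in self.flags.items()
            if count >= self.review_threshold
        )


log = FeedbackLog(review_threshold=2)
log.flag("rec-17")
log.flag("rec-42")
log.flag("rec-17")
print(log.needs_review())  # ['rec-17']
```

The design choice worth noting is that the tool only routes outputs to people. Deciding whether a flagged output is actually biased remains a human judgment shared across disciplines.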

The designer's role in AI-driven experiences does not end with implementation. Given the continuous evolution of algorithms and the potential for bias, designers should contribute to ongoing oversight and refinement. They should work collaboratively with other experts to ensure that AI systems adapt to changing contexts, remain fair, and continue to deliver successful experiences for diverse users over time.

Outro

The role of designers in an AI-driven, technological context is evolving rapidly, bringing new responsibilities and transforming the profession itself. While designers should strive to preserve the foundations of their discipline, they must also continuously assess AI's impact from multiple perspectives and understand the ongoing transformation of their field—embracing and adapting to these changes. This assessment includes considering market impact, technological innovation, data ethics, bias mitigation, harm reduction, and sustainability.

A key challenge for designers in this context is developing personalized, interconnected ecosystems using mainstream technologies. This requires expanding skill sets, learning new terminologies and processes, and broadening our understanding of user personas. Designers must now consider a wider ecosystem of stakeholders—from individuals to organizations, and even the planet itself. Additionally, reimagining the user journey to incorporate a diverse range of AI-related technologies and data flows is essential. Designers should also recognize the importance of supporting the orchestration processes for AI models. This extends beyond traditional design practices and involves managing complex backstage processes that underpin AI-driven experiences, ultimately ensuring efficient outcomes.

The rapid transformation of user interactions necessitates designing multimodal experiences, incorporating not only traditional interfaces but also conversational ones, such as text, audio, or video-based interactions. This expansion presents both challenges and opportunities for innovation in everyday interactions.

The integration of machine and human cognition in design processes opens up new frontiers. Generative AI and Large Language Models (LLMs) offer powerful tools for creativity and problem-solving. These technologies can enhance cognitive capabilities and provide novel forms of interaction, but they also have limitations—including the potential for "hallucinations" or factual errors. Human oversight remains crucial for ensuring contextual appropriateness, objectivity, and ethical judgment. Designers must explore collaborative approaches with AI that maintain human control and integrity. Key questions emerge: How can designers work more equitably alongside machines? What kinds of products, services, and experiences can effectively blend machine and human intelligence? How can designers foster empowering relationships between humans and AI?

Ethical considerations are paramount in AI-driven design. Designers must address the implications of algorithmic surveillance, user tracking, data collection and sale, and filter bubbles. This demands new approaches to envisioning, testing, benchmarking, and validation, prioritizing fairness throughout the AI development and design process. To tackle bias in AI development, designers should incorporate intersectional research and benchmarks from the outset. This is crucial for creating more inclusive, equitable products and services empowered by AI. Additionally, designers must anticipate potential pitfalls during the AI envisioning phase, considering unexpected outcomes to preemptively address challenges.

An important responsibility for designers is setting realistic expectations for AI-powered services and products. While ambitious visions are often promoted, designers must bridge the gap between these expectations and the current capabilities of AI, ensuring that clients and users have a clear understanding of what's possible.

Ultimately, designers have a crucial role in fostering a harmonious coexistence between humans, algorithms, and the environment. This involves not only creating functional and aesthetically pleasing products but also considering the broader ethical, social, and environmental implications of AI-driven experiences. By embracing these new roles, expanding their expertise, and collaborating across disciplines, designers can shape AI experiences in ways that are beneficial, equitable, and sustainable for all stakeholders. In doing so, they will play a pivotal role in guiding the future of AI toward a more balanced and responsible direction, creating value in this complex and evolving technological landscape.

Source: Images sourced from Pinterest and slides from labcoexist.

References

Friedman, B., & Hendry, D. G. (2019). Value sensitive design: Shaping technology with moral imagination. MIT Press.

Costanza-Chock, S. (2020). Design justice: Community-led practices to build the worlds we need. MIT Press.

Varon, J., Costanza-Chock, S., & Gebru, T. (2024). Fostering a federated AI commons ecosystem. Federation of American Scientists.

Crawford, K. (2021). Atlas of AI: Power, politics, and the planetary costs of artificial intelligence. Yale University Press.

Clark, A., & Chalmers, D. (1998). The extended mind. Analysis, 58(1), 7-19. https://doi.org/10.1093/analys/58.1.7

Sunshine, L. (2017). Bakhtin, theory of mind, and pedagogy: Cognitive construction of social class.

Mattin, D. (2023). Talking to ourselves. https://www.newworldsamehumans.xyz/p/talking-to-ourselves

labcoexist. (2023, October 21). Coexist: Chapter 4.1 - AI as a Mirror of Ourselves: Human & Machine Relationships. Substack. https://labcoexist.substack.com/p/coexist-chapter-41

labcoexist. (2023, October 2). Coexist: Chapter 4 - AI is a Mirror of Ourselves: On Transdisciplinarity. Substack. https://labcoexist.substack.com/p/coexist-chapter-4

labcoexist. (2023, September 18). Coexist: Chapter 2 - The Intangible Becomes Tangible. Substack. https://labcoexist.substack.com/p/coexist-chapter-2

labcoexist. (2023, September 11). Coexist: Chapter 1 - Designing in a technological context, what does it mean? Substack. https://labcoexist.substack.com/p/coexist-chapter-1

Tesla. (n.d.). Artificial intelligence at Tesla. https://www.tesla.com/AI

Wikipedia contributors. (n.d.). Ping An Good Doctor. Wikipedia. Retrieved October 22, 2024, from https://en.wikipedia.org/wiki/Ping_An_Good_Doctor

Mashable. (2019, January 4). RoboMart and Stop & Shop partner to launch autonomous grocery delivery. https://mashable.com/article/robomart-stop-and-shop-autonomous-grocery-delivery

Nvidia. (n.d.). Autonomous machines in car manufacturing. https://www.nvidia.com/en-us/autonomous-machines/embedded-systems/car-manufacturing-robotics/

Apple. (2023). Apple Vision Pro. https://www.apple.com/apple-vision-pro/

CNBC. (2023, October 5). Hyundai Metamobility [Video]. YouTube. https://www.youtube.com/watch?v=mUcfPxr0X_E

Moxie Robot. (n.d.). Moxie robot for children. https://moxierobot.com

ABBA Voyage. (n.d.). The ABBA Voyage concert experience. https://abbavoyage.com/

UC Berkeley. (2023, October 18). Mortgage algorithms perpetuate racial bias in lending, study finds. Berkeley News. https://vcresearch.berkeley.edu/news/mortgage-algorithms-perpetuate-racial-bias-lending-study-finds

Pilling, D. (2023, January 18). Inside the shadow workforce that helped build ChatGPT: Kenyan workers describe intense labor conditions. Time. https://time.com/6247678/openai-chatgpt-kenya-workers/

Cox, J. (2023, February 9). My AI is sexually harassing me: Replika users claim chatbot crossed the line. Vice. https://www.vice.com/en/article/my-ai-is-sexually-harassing-me-replika-chatbot-nudes/

Hern, A. (2023, December 27). New York Times sues OpenAI and Microsoft over data scraping for AI training. The Guardian. https://www.theguardian.com/media/2023/dec/27/new-york-times-openai-microsoft-lawsuit